A somewhat tongue-in-cheek (somewhat, but not completely) high-level overview of the homeland I used to want to visit, with most emphasis on Denmark and some held in reserve for Sweden, in the NY Post (by way of Bird Dog at Maggie’s Farm).

Let’s look a little closer, suggests Michael Booth, a Brit who has lived in Denmark for many years, in his new book, “The Almost Nearly Perfect People: Behind the Myth of the Scandinavian Utopia” (Picador).

Those sky-high happiness surveys, it turns out, are mostly bunk. Asking people “Are you happy?” means different things in different cultures. In Japan, for instance, answering “Yes” seems like boasting, Booth points out. Whereas in Denmark, it’s considered “shameful to be unhappy,” newspaper editor Anne Knudsen says in the book.

Moreover, there is a group of people that believes the Danes are lying when they say they’re the happiest people on the planet. This group is known as “Danes.”

:

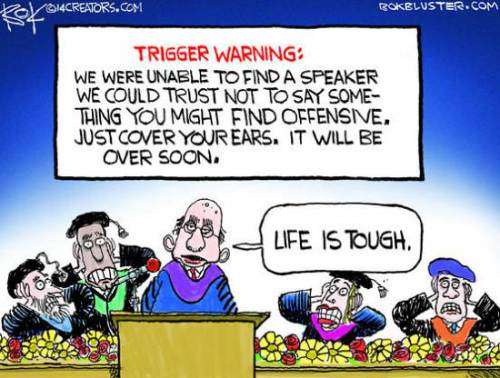

An American woman told Booth how, when she excitedly mentioned at a dinner party that her kid was first in his class at school, she was met with icy silence.

One of the country’s most widely known quirks is a satirist’s crafting of what’s still known as the Jante Law: the Ten Commandments of Buzzkill. “You shall not believe that you are someone,” goes one. “You shall not believe that you are as good as we are,” is another. Others included “You shall not believe that you are going to amount to anything,” “You shall not believe that you are more important than we are” and “You shall not laugh at us.”

:

In addition to paying enormous taxes — the total bill is 58 percent to 72 percent of income — Danes have to pay more for just about everything. Books are a luxury item. Their equivalent of the George Washington Bridge costs $45 to cross. Health care is free — which means you pay in time instead of money. Services are distributed only after endless stays in waiting rooms. Pharmacies are a state-run monopoly, which means getting an aspirin is like a trip to the DMV.

:

Scandinavia, as a wag in The Economist once put it, is a great place to be born, but only if you are average. The dead-on satire of Scandinavian mores “Together” is a 2000 movie by Sweden’s Lukas Moodysson set in a multi-family commune in 1975, when the groovy Social Democratic ideal was utterly unquestioned in Sweden.

In the film’s signature scene, a sensitive, apron-wearing man tells his niece and nephew as he is making breakfast, “You could say that we are like porridge. First we’re like small oat flakes: small, dry, fragile, alone. But then we’re cooked with the other oat flakes and become soft. We join so that one flake can’t be told apart from another. We’re almost dissolved. Together we become a big porridge that’s warm, tasty, and nutritious and yes, quite beautiful, too. So we are no longer small and isolated but we have become warm, soft and joined together. Part of something bigger than ourselves. Sometimes life feels like an enormous porridge, don’t you think?”

Then he spoons a great glutinous glob of tasteless starch onto the poor kids’ plates. That’s Scandinavia for you, folks: Bland, wholesome, individual-erasing mush. But, hey, at least we’re all united in being slowly digested by the system.

The News Junkie follows along, and adds a link:

Naturally enough, the architecture of the welfare state was designed and developed with European realities in mind, the most important of which were European beliefs about poverty. Thanks to their history of Old World feudalism, with its centuries of rigid class barriers and attendant lack of opportunity for mobility based on merit, Europeans held a powerful, continentally pervasive belief that ordinary people who found themselves in poverty or need were effectively stuck in it — and, no less important, that they were stuck through no fault of their own, but rather by an accident of birth. The state provision of old-age pensions, unemployment benefits, and health services — along with official family support and other household-income guarantees — served a multiplicity of purposes for European political economies, not the least of which was to assuage voters’ discontent with the perceived shortcomings of their countries’ social structures through a highly visible and explicitly political mechanism for broadly based and compensatory income redistribution.

But America’s historical experience has been rather different from Europe’s, and from the earliest days of the great American experiment, people in the United States exhibited strikingly different views from their trans-Atlantic cousins on the questions of poverty and social welfare. These differences were noted both by Americans themselves and by foreign visitors, not least among them Alexis de Tocqueville, whose conception of American exceptionalism was heavily influenced by the distinctive American worldview on such matters. Because America had no feudal past and no lingering aristocracy, poverty was not viewed as the result of an unalterable accident of birth but instead as a temporary challenge that could be overcome with determination and character — with enterprise, hard work, and grit. Rightly or wrongly, Americans viewed themselves as masters of their own fate, intensely proud because they were self-reliant.

To the American mind, poverty could never be regarded as a permanent condition for anyone in any stratum of society because of the country’s boundless possibilities for individual self-advancement. Self-reliance and personal initiative were, in this way of thinking, the critical factors in staying out of need. Generosity, too, was very much a part of that American ethos; the American impulse to lend a hand (sometimes a very generous hand) to neighbors in need of help was ingrained in the immigrant and settler traditions. But thanks to a strong underlying streak of Puritanism, Americans reflexively parsed the needy into two categories: what came to be called the deserving and the undeserving poor. To assist the former, the American prescription was community-based charity from its famously vibrant “voluntary associations.” The latter — men and women judged responsible for their own dire circumstances due to laziness, or drinking problems, or other behavior associated with flawed character — were seen as mainly needing assistance in “changing their ways.” In either case, charitable aid was typically envisioned as a temporary intervention to help good people get through a bad spell and back on their feet. Long-term dependence upon handouts was “pauperism,” an odious condition no self-respecting American would readily accept.

Right, the local widow and the town drunk. Both without means, one through no fault of her own, after a lifetime of doing what she was supposed to do; the other one impoverished by choice.

Suffice it to say, the United States arrived late to the 20th century’s entitlement party, and the hesitance to embrace the welfare state lingered on well after the Depression. As recently as the early 1960s, the “footprint” left on America’s GDP by the welfare state was not dramatically larger than it had been under Franklin Roosevelt — or Herbert Hoover, for that matter. In 1961, at the start of the Kennedy Administration, total government entitlement transfers to individual recipients accounted for a little less than 5% of GDP, as opposed to 2.5% of GDP in 1931 just before the New Deal. In 1963 – the year of Kennedy’s assassination – these entitlement transfers accounted for about 6% of total personal income in America, as against a bit less than 4% in 1936.

During the 1960s, however, America’s traditional aversion to the welfare state and all its works largely collapsed. President Johnson’s “War on Poverty” and his “Great Society” pledge of the same year ushered in a new era for America, in which Washington finally commenced in earnest the construction of a massive welfare state. In the decades that followed, America not only markedly expanded provision for current or past workers who qualified for benefits under existing “social insurance” arrangements, it also inaugurated a panoply of nationwide programs for “income maintenance” (food stamps, housing subsidies, Supplemental Social Security Insurance, and the like) where eligibility turned not on work history but on officially designated “poverty” status. The government also added health-care guarantees for retirees and the officially poor, with Medicare, Medicaid, and their accompaniments. In other words, Americans could claim, and obtain, an increasing trove of economic benefits from the government simply by dint of being a citizen; they were now incontestably entitled under law to some measure of transferred public bounty, thanks to our new “entitlement state.”

The expansion of the American welfare state remains very much a work in progress; the latest addition to that edifice is, of course, the Affordable Care Act. Despite its recent decades of rapid growth, the American welfare state may still look modest in scope and scale compared to some of its European counterparts. Nonetheless, over the past two generations, the remarkable growth of the entitlement state has radically transformed both the American government and the American way of life itself. It is not too much to call those changes revolutionary.

:

By 2012, the most recent year for such figures at this writing, Census Bureau estimates indicated that more than 150 million Americans, or a little more than 49% of the population, lived in households that received at least one entitlement benefit. Since under-reporting of government transfers is characteristic for survey respondents, and since administrative records suggest the Census Bureau’s own adjustments and corrections do not completely compensate for the under-reporting problem, this likely means that America has already passed the symbolic threshold where a majority of the population is asking for, and accepting, welfare-state transfers.

It’s sad when a passion dies. My desire to visit the “fatherland” has evaporated for the most part, and I note that any need to do so seems to have vanished along with it. We’re all cooked now; what’s the point of traveling from one bowl of soft, slimy porridge to another?

What’s the solution? There has to be some sort of “uncooking.” That seems unrealistic when one views it from the porridge analogy. Which sadly fits, because once a grain of barley has been cooked and softened it’s no simple matter to get it firm and “flaky” again.

But the problem was created by way of an errant, extremist, and therefore fragile, mindset. We imported from Europe the mindset that, when a woman at a dinner table shows pride that her son placed first in his class at school, she should be scorned. And that a “fundamental transformation,” to coin a phrase, into a welfare state is some sort of laudable ambition for a head of that state to hold. So topsy-turvy is this Weltanschauung that the quickest way to get to it is to see any object or concept connected with it as the opposite of what it truly is. Excellence is to be mocked, independence scorned the way we are supposed to scorn crime. Reliance on public assistance is somehow the dream of a lifetime…and on and on down the line. Such a way of looking at things cannot endure without a lot of support, from within and without. Reality will not offer a helping hand. The solution to the problem is in there. Somewhere.

The point to all this, in my mind, is: The “victimology” complex, the “I’m poor because I was born that way” thing, is an offshoot of aristocratic stratification that existed over there, but never over here. We don’t have any business clinging to it, because we don’t have the historical underpinnings to support it. It’s been said that in America, you can be anything you want to be. That isn’t just a bumper-sticker slogan. It will never be reduced to an empty rhetorical nugget, some sort of laughable nullity, unless we allow it to be.

Obama did not even bother to attend a solidarity march for Paris that was held in Washington D.C. yesterday despite the participation of American officials like the State Department’s Victoria Nuland. “Obama wasn’t far from the march in D.C. on Sunday that wended silently along six blocks from the Newseum to the National Law Enforcement Officers Memorial,” Politico reported. “Instead, he spent the chilly afternoon a few blocks away at the White House, with no public schedule, no outings.”

Mary D. Lewis, a professor who specializes in the history of modern France and has led opposition to the benefit changes, said they were tantamount to a pay cut. “Moreover,” she said, “this pay cut will be timed to come at precisely the moment when you are sick, stressed or facing the challenges of being a new parent.”

Fuck your trauma.

You have to wonder if these folks have actually read “A Christmas Carol” or spent any time pondering what Scrooge actually says and does. Because if you do, you come to realize that Scrooge more closely resembles a modern liberal than a conservative.
